adamax_update.hpp File Reference

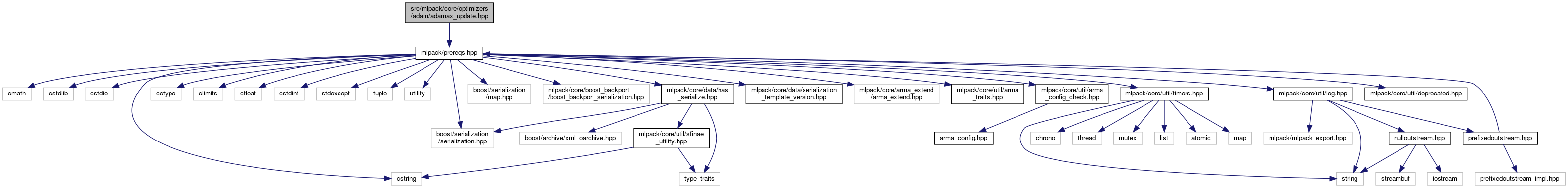

Include dependency graph for adamax_update.hpp:

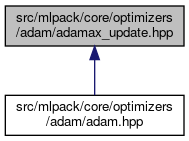

This graph shows which files directly or indirectly include this file:

Classes | |

| class | AdaMaxUpdate |

| AdaMax is a variant of Adam, an optimizer that computes individual adaptive learning rates for different parameters from estimates of the first and second moments of the gradients. AdaMax is based on the infinity norm, as described in Section 7 of the Adam paper. | |

Namespaces | |

| mlpack | |

| mlpack::optimization | |

Detailed Description

AdaMax update rule. Adam is an algorithm for first-order gradient-based optimization of stochastic objective functions, based on adaptive estimates of lower-order moments. AdaMax is a variant of Adam based on the infinity norm.
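
As a rough illustration of the rule described above, the following is a minimal standalone sketch of one AdaMax step on plain `std::vector<double>` data. It is not the `AdaMaxUpdate` class from this file: the struct name `AdaMaxSketch`, its members, and the `Update` signature are hypothetical, the default values (step size 0.002, beta1 0.9, beta2 0.999) are the ones commonly quoted for AdaMax, and the small `epsilon` in the denominator is an assumption added to avoid division by zero.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical standalone sketch of the AdaMax rule; not mlpack's API.
struct AdaMaxSketch
{
  double stepSize = 0.002;   // Learning rate (assumed default).
  double beta1 = 0.9;        // Decay rate of the first moment estimate.
  double beta2 = 0.999;      // Decay rate of the infinity-norm estimate.
  double epsilon = 1e-8;     // Assumption: guards against division by zero.
  std::size_t iteration = 0; // Number of updates performed so far.
  std::vector<double> m;     // Biased first moment estimate.
  std::vector<double> u;     // Exponentially weighted infinity norm.

  explicit AdaMaxSketch(const std::size_t n) : m(n, 0.0), u(n, 0.0) { }

  // Apply one AdaMax step to `iterate`, given the gradient at that point.
  void Update(std::vector<double>& iterate,
              const std::vector<double>& gradient)
  {
    ++iteration;
    // Only the first moment needs bias correction in AdaMax.
    const double biasCorrection =
        1.0 - std::pow(beta1, static_cast<double>(iteration));

    for (std::size_t i = 0; i < iterate.size(); ++i)
    {
      // Exponential moving average of the gradient.
      m[i] = beta1 * m[i] + (1.0 - beta1) * gradient[i];
      // Infinity-norm counterpart of Adam's second moment estimate.
      u[i] = std::max(beta2 * u[i], std::abs(gradient[i]));
      // Parameter step scaled by the bias-corrected first moment.
      iterate[i] -= (stepSize / biasCorrection) * m[i] / (u[i] + epsilon);
    }
  }
};
```

The only difference from Adam in this sketch is the `u` update: a running maximum of absolute gradients replaces the running average of squared gradients, so no square root and no second bias correction are needed.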

mlpack is free software; you may redistribute it and/or modify it under the terms of the 3-clause BSD license. You should have received a copy of the 3-clause BSD license along with mlpack. If not, see http://www.opensource.org/licenses/BSD-3-Clause for more information.

Definition in file adamax_update.hpp.