Classes | |

| class | AdamType< UpdateRule > |

| Adam is an optimizer that computes individual adaptive learning rates for different parameters from estimates of first and second moments of the gradients. | |

Namespaces | |

| mlpack | |

| mlpack::optimization | |

Typedefs | |

| using | Adam = AdamType< AdamUpdate > |

| using | AdaMax = AdamType< AdaMaxUpdate > |

| using | AMSGrad = AdamType< AMSGradUpdate > |

| using | Nadam = AdamType< NadamUpdate > |

| using | NadaMax = AdamType< NadaMaxUpdate > |

| using | OptimisticAdam = AdamType< OptimisticAdamUpdate > |
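Each typedef above selects a variant by passing a different update rule as the template argument of AdamType. The sketch below illustrates that policy-based pattern in isolation. It does not reproduce mlpack's classes: `SketchOptimizerType`, `GradientStep`, `SignStep` and the `Update` signature are hypothetical names invented for this illustration.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical update policy (not an mlpack class): plain gradient step.
struct GradientStep
{
  // Move against the gradient by step * g.
  static double Update(double x, double g, double step)
  {
    return x - step * g;
  }
};

// Hypothetical update policy (not an mlpack class): sign-based step.
struct SignStep
{
  // Move by a fixed step opposite to the sign of the gradient.
  static double Update(double x, double g, double step)
  {
    const double sign = (g > 0.0) - (g < 0.0);
    return x - step * sign;
  }
};

// Hypothetical optimizer template: the loop is shared, while the
// per-coordinate update is delegated to the UpdateRule policy.
template<typename UpdateRule>
class SketchOptimizerType
{
 public:
  explicit SketchOptimizerType(double stepSize) : stepSize(stepSize) {}

  // Apply one update to every coordinate of params.
  void Step(std::vector<double>& params,
            const std::vector<double>& grad) const
  {
    for (std::size_t i = 0; i < params.size(); ++i)
      params[i] = UpdateRule::Update(params[i], grad[i], stepSize);
  }

 private:
  double stepSize;
};

// Aliases in the same style as the typedefs listed above.
using SketchGradient = SketchOptimizerType<GradientStep>;
using SketchSign = SketchOptimizerType<SignStep>;
```

With this layout, adding a new variant only requires a new policy struct and one alias. The shared optimizer code is not touched.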

Detailed Description

Adam, AdaMax, AMSGrad, Nadam and NadaMax optimizers. Adam is an algorithm for first-order gradient-based optimization of stochastic objective functions, based on adaptive estimates of lower-order moments. AdaMax is a variant of Adam based on the infinity norm. AMSGrad is a variant of Adam with improved convergence guarantees. Nadam is a variant of Adam that incorporates Nesterov's accelerated gradient (NAG). NadaMax is a variant of Nadam based on the infinity norm.
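The "adaptive estimates of lower-order moments" can be made concrete with a minimal sketch of one standard Adam step, written in plain C++ with no mlpack dependency. The names `AdamState` and `AdamStep` are hypothetical, and the default hyperparameters (step size 0.001, beta1 0.9, beta2 0.999, epsilon 1e-8) are the commonly cited values, assumed here and not taken from adam.hpp.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper, not part of mlpack: running state for Adam.
struct AdamState
{
  std::vector<double> m;  // first-moment (mean) estimate of the gradient
  std::vector<double> v;  // second-moment (uncentered variance) estimate
  std::size_t t = 0;      // number of steps taken so far

  explicit AdamState(std::size_t n) : m(n, 0.0), v(n, 0.0) {}
};

// Hypothetical helper, not part of mlpack: one Adam step on params.
// Default hyperparameters are assumed, commonly cited values.
inline void AdamStep(std::vector<double>& params,
                     const std::vector<double>& grad,
                     AdamState& state,
                     double stepSize = 0.001,
                     double beta1 = 0.9,
                     double beta2 = 0.999,
                     double eps = 1e-8)
{
  ++state.t;
  // Bias-correction factors compensate for the zero initialization
  // of the moment estimates.
  const double c1 = 1.0 - std::pow(beta1, static_cast<double>(state.t));
  const double c2 = 1.0 - std::pow(beta2, static_cast<double>(state.t));

  for (std::size_t i = 0; i < params.size(); ++i)
  {
    // Exponential moving averages of the gradient and its square.
    state.m[i] = beta1 * state.m[i] + (1.0 - beta1) * grad[i];
    state.v[i] = beta2 * state.v[i] + (1.0 - beta2) * grad[i] * grad[i];

    const double mHat = state.m[i] / c1;
    const double vHat = state.v[i] / c2;

    // Per-parameter step: scaled by the root of the second moment.
    params[i] -= stepSize * mHat / (std::sqrt(vHat) + eps);
  }
}
```

On the first step the bias-corrected moments reduce to the gradient and its square, so each parameter moves by roughly `stepSize` in the direction opposite to the sign of its gradient, regardless of the gradient's magnitude. An infinity-norm variant would replace the squared-gradient average with a running maximum of absolute gradient values.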

mlpack is free software; you may redistribute it and/or modify it under the terms of the 3-clause BSD license. You should have received a copy of the 3-clause BSD license along with mlpack. If not, see http://www.opensource.org/licenses/BSD-3-Clause for more information.

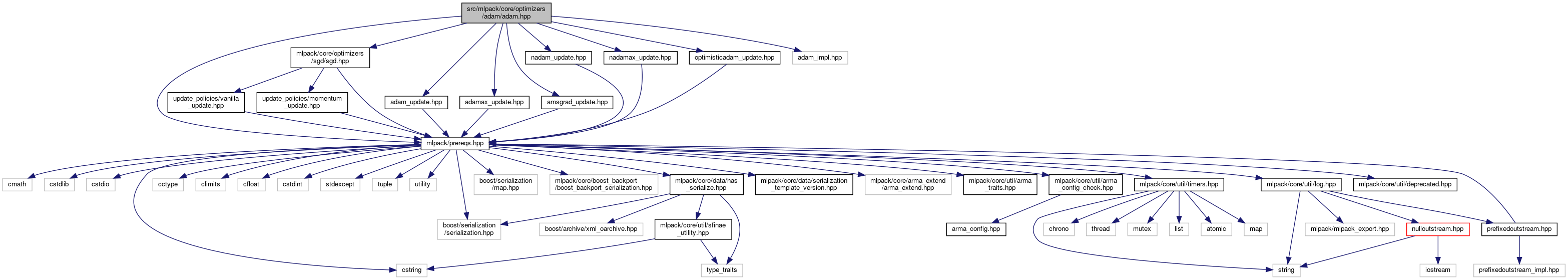

Definition in file adam.hpp.